A while ago one of my colleagues handed me the above picture. It was funny, so I put it up on my wall. I could see how this situation could happen, but I couldn’t recall any good example from my own experience.

Usually a bug in software is fairly obvious. If the stakeholders and the developers are debating it, it is probably a design problem rather than a software bug.

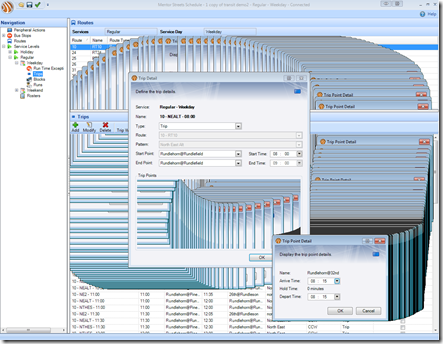

During the last sprint of the release, my team debated a side effect that was introduced by a UI architecture change (I am guilty of making that change). We joked that we could sell this bug as a FEATURE, and we named this feature “Instance Preview”. It is similar to the formatting preview in MS Word 2007, where you can see how a formatting change will look simply by hovering your mouse over the toolbar items.

Background Story

A couple of years ago, our development team came up with a new UI architecture that manages how data are modified in the application. It allows users to cancel their changes at the level of each sub form (window). The application was tightly coupled to a typed DataSet, which acts as an in-memory database that the application manipulates. Most of the UI controls are bound to the DataSet through data binding.

So we knew we needed some sort of versioning to keep track of data changes. The DataSet has a row version feature, but the development team didn’t understand it well back then, so we implemented our own mechanism, which we called the FormTransaction. Each FormTransaction object contains a DataSet and holds a reference to a parent FormTransaction. Whenever the user drills down into the data details, a sub window is opened and a FormTransaction object is created along with it, containing a subset of the data from the Main DataSet.

The Main DataSet is the application’s main data repository. Each form is data bound to its FormTransaction’s DataSet. When a user makes a change in the UI, the data are immediately updated in that DataSet, and if another form updates the same DataSet, the change immediately shows up in every other UI bound to it. To discard their changes, users simply click the Cancel button on the form, and the sub DataSet is disposed. To save their changes, the data are merged into the parent DataSet, level by level, until all the changes reach the Main DataSet.
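To make the nesting concrete, here is a minimal C# sketch of the FormTransaction idea, not our actual implementation. It assumes every table has a primary key, because DataSet.Merge matches incoming rows to existing rows by primary key. The member names (BeginChild, Commit) are hypothetical, and for brevity the child copies the parent’s whole DataSet with DataSet.Copy() instead of loading only the subset the sub form needs.

```csharp
using System;
using System.Data;

// Hypothetical sketch of the FormTransaction idea: each sub form works on its own
// copy of the data and pushes it to its parent only when the user clicks OK.
// Assumption: every table has a primary key so Merge can match rows.
public sealed class FormTransaction : IDisposable
{
    private readonly FormTransaction _parent; // null for the top-level transaction

    public DataSet Data { get; }

    // Top-level transaction: wraps the Main DataSet itself (no copy).
    public FormTransaction(DataSet mainDataSet)
    {
        Data = mainDataSet;
    }

    // Child transaction: a private copy of the parent's data.
    // (The real design loaded only the subset the sub form needs.)
    private FormTransaction(FormTransaction parent)
    {
        _parent = parent;
        Data = parent.Data.Copy();
    }

    // Called when a sub window is opened.
    public FormTransaction BeginChild() => new FormTransaction(this);

    // OK button: merge this level's changes one level up.
    public void Commit()
    {
        if (_parent == null)
            throw new InvalidOperationException("The top-level transaction has no parent to merge into.");
        _parent.Data.Merge(Data);
    }

    // Cancel button (or after Commit): throw the copy away.
    public void Dispose()
    {
        if (_parent != null)
            Data.Dispose(); // never dispose the Main DataSet
    }
}
```

Because the child edits its own copy, nothing reaches the parent until Commit() runs, which is exactly why Cancel is cheap in this design while loading and merging are expensive.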

FormTransaction Advantages:

- Cancelling changes is easy to implement: you simply close the form and dispose of the FormTransaction object

- You can control what data are loaded into the sub DataSet, so it also acts as a filter

FormTransaction Disadvantages:

- DataSet constraint validation can only run when data are merged back into the Main DataSet. This makes debugging more challenging, because you won’t know which form created the invalid data until the merge happens.

- Increased memory usage: if you need to load a lot of data in the sub form, you need to duplicate the rows

- It takes time to load data from the Main DataSet into the sub DataSet, and it also takes time to merge the changes into the parent DataSet.

Over the past year, we noticed that our application was getting slower because it had to handle more and more data. The DataSet definition had grown, and more data were needed for certain calculations. The FormTransactions were slowing down the application.

In addition, we needed to build another feature that required loading a lot of data into a sub form. This UI architecture simply did not scale. So we came up with a newer approach based on the opposite idea of the FormTransaction, which we called the RollbackTransaction.

RollbackTransaction Advantages

- No memory duplication: all the forms work on the Main DataSet.

- No need to load data, figure out what data the sub form needs, or merge anything.

- Your sub form has access to all the data all the time.

- Changes made by the user are validated immediately

RollbackTransaction Disadvantages

- Data filtering has to be implemented on every data-bound control, since the FormTransaction is no longer there to filter the data.

- If the user made a lot of changes, undoing (rolling back) them can be slow

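The implementation of the RollbackTransaction is pretty trivial. You basically back up the original value of everything that changes. The DataTable raises events whenever its data change, such as ColumnChanging, ColumnChanged, RowChanging, RowChanged, RowDeleting and RowDeleted.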

You can attach to these events and record each data change. When the user wants to undo the changes, replay the recorded actions backwards, undoing each one (i.e. if a new row was added, delete it).
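A minimal C# sketch of that approach follows. It is not our production code: the class shape and member names are hypothetical, it keeps the changes on a stack so the most recent change is undone first, and it only tracks field edits (ColumnChanging) and new rows (RowChanged with DataRowAction.Add). Row deletions are deliberately left out of the sketch.

```csharp
using System;
using System.Collections.Generic;
using System.Data;

// Hypothetical sketch of a RollbackTransaction: forms edit the Main DataSet
// directly, and this class records enough information to undo those edits.
// Assumption: only field edits and added rows are tracked; deletions are not.
public sealed class RollbackTransaction : IDisposable
{
    // One recorded change. Column == null means "this row was added".
    private sealed class UndoStep
    {
        public DataRow Row;
        public DataColumn Column;
        public object OldValue;
    }

    private readonly List<DataTable> _tables = new List<DataTable>();
    private readonly Stack<UndoStep> _log = new Stack<UndoStep>();
    private bool _rollingBack; // ignore the events raised by our own undo

    public RollbackTransaction(DataSet data)
    {
        foreach (DataTable table in data.Tables)
        {
            table.ColumnChanging += OnColumnChanging;
            table.RowChanged += OnRowChanged;
            _tables.Add(table);
        }
    }

    private void OnColumnChanging(object sender, DataColumnChangeEventArgs e)
    {
        // Rows not yet in the table have nothing to restore.
        if (_rollingBack || e.Row.RowState == DataRowState.Detached) return;
        // Back up the value before the new one is applied.
        _log.Push(new UndoStep { Row = e.Row, Column = e.Column, OldValue = e.Row[e.Column] });
    }

    private void OnRowChanged(object sender, DataRowChangeEventArgs e)
    {
        if (!_rollingBack && e.Action == DataRowAction.Add)
            _log.Push(new UndoStep { Row = e.Row });
    }

    // Cancel button: replay the log backwards.
    public void Rollback()
    {
        _rollingBack = true;
        try
        {
            while (_log.Count > 0)
            {
                UndoStep step = _log.Pop();
                DataRowState state = step.Row.RowState;
                if (state == DataRowState.Detached || state == DataRowState.Deleted) continue;

                if (step.Column == null)
                    step.Row.Delete();                  // undo an added row
                else
                    step.Row[step.Column] = step.OldValue; // restore the backed-up value
            }
        }
        finally
        {
            _rollingBack = false;
        }
    }

    // OK button: keep the changes and forget the undo information.
    public void Commit() => _log.Clear();

    public void Dispose()
    {
        foreach (DataTable table in _tables)
        {
            table.ColumnChanging -= OnColumnChanging;
            table.RowChanged -= OnRowChanged;
        }
    }
}
```

Note that while such a transaction is open, every bound control sees the edits immediately, because they go straight into the Main DataSet.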

Side Effects

The RollbackTransaction was great; it sped up the application. However, there was a minor side effect. Since all the forms that use a RollbackTransaction are bound to the same DataSet, any change you make in a child form shows up immediately in the parent form. This side effect looks really cool, because you can see how the parent form behaves as you modify the child form. For example, if the parent form has a sorted grid and you change the value of the sorted column in the child form, the grid re-sorts itself as you type.

Did We Break the User Experience (UX)?

If you take a closer look at our application design (above), you will notice there is an OK button and a Cancel button. The major conflict introduced by this side effect is that the parent form is updated while the user is making changes, before the user has clicked OK. The good news is that these windows are modal, which means the user can’t edit the data from multiple windows at once. Most of the time the user may not even notice the parent form updating, because it is covered by the child form.

With many tasks on our hands, our team left this minor side effect alone and moved on to other development.

Trying to Fix the Side Effect

To fix the issue, our idea was to update the DataSet but delay the parent form’s update, even though the form is bound to the same DataSet. We knew we couldn’t just unbind the form, because it would then show no data at all. That would look silly, and it would be worse than the side effect.

There had to be a way to suspend the data binding. The grid was the first UI control whose data binding we wanted to suspend. We use the Infragistics UltraGrid. UltraGrid has a lot of functionality, and I was hopeful that it would have one method to suspend the data binding and another to resume it, causing the grid to refresh itself.

We tried a number of solutions:

- UltraGrid.BeginUpdate() – This method looked very promising. In fact, it was the first solution that I implemented. It did stop UltraGrid from painting itself, and I got what I expected, until a QA tester came to me with the following issue:

So, what was going on? Why would the QA machine behave differently from mine? Well, there was a big difference: I was running Windows 7 with the Aero theme, while the QA machine was running Windows XP with the Classic theme.

The Aero theme works most likely because, with Aero, Windows composes every window from an off-screen image of it (the same mechanism that produces the glass effect on the title bar). When UltraGrid.BeginUpdate() is called, the grid stops painting, but the last image Windows captured stays on screen. With XP or the Windows 7 Classic theme there is no such off-screen image, so the area the grid no longer paints is not redrawn correctly. As a result, you get the above issue.

- UltraDataSource.SuspendBindingNotifications() – This method also looked promising. Unfortunately we don’t use UltraDataSource, since the grid binds directly to the DataSet.

- Create a data binding proxy – With a proxy, we would have more control over what data go into the UI. However, such an implementation can get complicated, and it is really the same concept as the FormTransaction.

- Override the CurrencyManager – Totally the wrong concept. The CurrencyManager can’t control when the UI updates; its purpose is to keep record navigation (the current position) synchronized across the controls bound to the same data source.

- Use a picture box to mask the underlying changes – I saw this idea on a forum. I thought it was funny, so I mentioned it to the team, and we concluded that there had to be a better solution. The limitation of using a picture box is that you can’t interact with the grid at all. However, since we use modal windows, it might work in our situation.

Why Don’t We Just Sell the Bug as a Feature!

Having failed to find a good way to fix this side effect (or bug), the development team (myself included) tried to convince ourselves that this bug was really an awesome feature. Existing customers might find it odd, but the functionality still works, and new customers probably wouldn’t care. All we needed to do was sell this bug as a feature to the QA team and other stakeholders. We called it “Instance Preview”.

Instance Preview – Similar to the MS Word preview when applying a format change, users can immediately see data changes as they modify the detail form, before the changes are committed.

I bet the QA team wouldn’t even know that it is a bug! We could market this as a new product feature. I thought this was evil genius: it saves the developers the time of fixing the problem, and we get a new feature.

Solution

Of course, who were we kidding? No customer ever asked for such a feature, and adding a feature that has no demand and no proper requirements would create more maintenance problems down the road. So I contacted Infragistics, hoping they could provide a better solution:

http://forums.infragistics.com/forums/p/60424/306715.aspx#306715

And the solution is… (drum roll)… a picture box!!!

Here is a code snippet:

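```csharp
using System.Drawing;
using System.Windows.Forms;
using Infragistics.Win.UltraWinGrid;

// grid is the UltraGrid to cover (ultraGrid1 in our form).
// Take a snapshot of the grid as it looks right now.
Size s = grid.Size;
Bitmap memoryImage = new Bitmap(s.Width, s.Height);
// The target rectangle is in bitmap coordinates, so draw at (0, 0).
grid.DrawToBitmap(memoryImage, new Rectangle(Point.Empty, s));

// Lay the snapshot over the grid, in the same container and at the same position.
PictureBox p = new PictureBox();
p.Name = "CoverGrid";
p.Image = memoryImage;
p.Location = grid.Location;
p.Size = s;
grid.Parent.Controls.Add(p);
p.BringToFront();

// Stop the grid from painting while it is hidden behind the snapshot.
// When the child form closes: call grid.EndUpdate(), then remove and dispose p.
grid.BeginUpdate();
```

The code turned out to be simpler than I expected. Originally I was worried about how the PictureBox would behave when masking the original window: would the picture box follow the grid if the user moved the window around? It does, because the PictureBox is added to the same container as the grid and gets the grid’s location and size. Child control coordinates are relative to their parent, so when the window moves, the grid and the PictureBox move together. Note that Point and Size are structs (value types), so the PictureBox gets copies of the grid’s values rather than live references; if the grid itself were moved or resized while covered, the PictureBox would not follow, but with a modal child form on top that doesn’t happen.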

Ultimately, our team called this technique “PictureBoxing”.

This technique works not only for the grid but also for some other controls in our application.

Other Bug-Like Features or Feature-Like Bugs

- Incorrect decimal rounding due to an unclear specification

- Duplicate data being generated, and you tell your customer this increases reliability

- Clicking and holding a Windows 7 title bar and shaking it minimizes the other windows

- Shaking your iOS device pops up “There is nothing to undo”

- A logon screen that asks if you want to save your password, and yet you have to retype your password the next time you run the application. Reason: the saved password is only used during the session to reduce repeated logons, and the application never persists the password.

- A calculation appears to be incorrect because it is displayed in metric units while users expected imperial units.

I am sure there are many similar situations where the difference between a bug and a feature is merely a matter of perspective.